The Eight Stages of AI-Assisted Software Development Mastery

Artificial intelligence’s programming capabilities are advancing faster than developers can put them to use. That gap helps explain why impressive SWE-bench scores don’t translate into the productivity gains engineering managers expect. One team ships a product like Cowork in just ten days using advanced AI models, while another team, using identical technology, struggles with a broken proof of concept. The difference lies in how well each team bridges the gap between what the models can do and how they are actually applied.

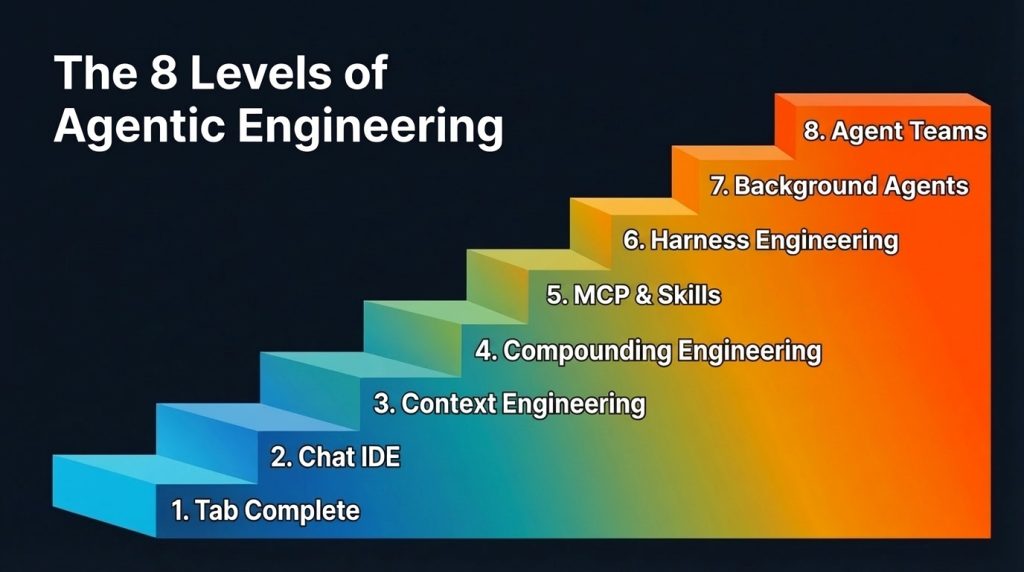

Closing that gap is a progression through eight distinct stages of mastery. Most developers have already moved past the initial stages and should focus on reaching the next ones, since each step up substantially increases output and amplifies the benefit of every model improvement.

Understanding these levels matters because of a collaborative multiplier effect: your productivity depends heavily on your teammates’ levels. Even a highly skilled developer whose background agents generate pull requests overnight will hit a bottleneck if colleagues at the early levels still review every change by hand.
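A toy model makes the bottleneck concrete. Treat delivery as a two-stage pipeline, agents generating pull requests and humans reviewing them: the pipeline can ship no faster than its slowest stage. The rates below are hypothetical numbers chosen only for illustration.

```python
def merged_per_day(prs_generated: float, prs_reviewed: float) -> float:
    """A two-stage pipeline ships no faster than its slowest stage."""
    return min(prs_generated, prs_reviewed)

# Hypothetical rates: agents open 20 PRs a day, but a teammate
# reviewing everything by hand clears only 4.
print(merged_per_day(20, 4))   # 4
# If review is partly automated (also hypothetical), the cap rises.
print(merged_per_day(20, 15))  # 15
```

Generating more pull requests changes nothing until the review rate rises, which is why a team’s slowest level sets everyone’s pace.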

Initial Stages: Tab Completion and Enhanced IDEs

The first two levels form the foundation of AI-assisted coding. AI tab completion was popularized by GitHub Copilot, letting developers autocomplete code segments. This approach favored experienced programmers, who could outline the structure of their code and let the AI fill in the details.
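For example, an experienced developer might write only the signature and docstring and let completion propose the body. The `median` function below is a hypothetical illustration: the body stands in for what a completion model might suggest, not for output from any particular tool.

```python
# Written by the developer: the outline (name, types, contract).
def median(values: list[float]) -> float:
    """Return the median of a non-empty list of numbers."""
    # Body of the kind a tab-completion model might fill in from the outline above.
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2

print(median([3, 1, 2]))     # 2
print(median([4, 1, 3, 2]))  # 2.5
```

The clearer the outline (types, docstring, name), the less the completion has to guess.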

AI-powered integrated development environments such as Cursor changed the workflow by connecting a chat interface to the entire codebase, which made multi-file changes far simpler. Context, however, remained a significant constraint: models could only work with the information in front of them, and they often struggled when given either too little or too much context.

Developers at this stage typically experiment with plan mode in their preferred coding agent, turning rough ideas into structured implementation plans for the model to follow. Plan mode is effective for keeping control, but it becomes less necessary at the advanced levels.

Context Engineering Mastery

The third level focuses on context engineering, which became prominent as models got better at following instructions, provided they were given the right amount of context. The challenge is to maximize information density: too much context adds noise, and too little leaves the task underspecified.
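One way to picture the density trade-off is as a budgeted selection problem: drop low-relevance snippets, then pack the most relevant ones until a token budget is spent. This is a minimal sketch under stated assumptions: relevance scores are taken as given from some upstream ranking step, tokens are approximated as whitespace-separated words (real systems use the model’s tokenizer), and `Snippet`, `approx_tokens`, and `select_context` are hypothetical helpers.

```python
from dataclasses import dataclass

@dataclass
class Snippet:
    source: str
    text: str
    relevance: float  # assumed to come from some upstream ranking step

def approx_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())

def select_context(snippets: list[Snippet], budget: int, min_relevance: float = 0.3) -> list[Snippet]:
    """Greedily pack the most relevant snippets into a token budget.

    The relevance floor limits noise; the budget caps total size.
    """
    chosen, used = [], 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        if s.relevance < min_relevance:
            break  # every remaining snippet is even less relevant
        cost = approx_tokens(s.text)
        if used + cost <= budget:
            chosen.append(s)
            used += cost
    return chosen

snippets = [
    Snippet("auth.py", "def login(user, password): ...", 0.9),
    Snippet("README.md", "Project overview and setup steps for new contributors", 0.4),
    Snippet("CHANGELOG.md", "v0.1 initial release", 0.1),
]
print([s.source for s in select_context(snippets, budget=12)])  # ['auth.py', 'README.md']
```

Shrinking the budget to 10 drops the README and keeps only `auth.py`; the changelog never makes it in because it falls below the relevance floor.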

Context engineering covers system prompts, rules files, tool descriptions, conversation history management, and deciding which tools to expose. Models read tool descriptions to decide when and how to call a tool, so clear descriptions are crucial for reliable function calling.
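Because the description is what the model reads when deciding whether and how to call a tool, it should state what the tool does, when to use it, and what each argument means. The definitions below describe parameters in the JSON Schema style that many function-calling APIs accept; the `search_issues` tool is hypothetical, and the top-level field names (`name`, `description`, `parameters`) are an assumption, so check your provider’s documentation for its exact format.

```python
import json

# Vague: the model has to guess when this applies and what "q" means.
vague_tool = {
    "name": "search",
    "description": "Searches stuff.",
    "parameters": {"type": "object", "properties": {"q": {"type": "string"}}},
}

# Clear: states purpose, when to use it, and the meaning of each argument.
clear_tool = {
    "name": "search_issues",  # hypothetical tool name
    "description": (
        "Search the project's issue tracker by keyword. Use this before filing "
        "a new bug to check for duplicates. Returns at most `limit` issues, "
        "newest first."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Keywords to match in issue titles and bodies."},
            "limit": {
                "type": "integer",
                "description": "Maximum number of results to return.",
                "minimum": 1,
                "maximum": 50,
            },
        },
        "required": ["query"],
    },
}

print(json.dumps(clear_tool, indent=2))
```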

Modern models tolerate messier context better, helped by larger context windows, but context engineering still matters in specific scenarios: smaller models used in voice applications, token-heavy tools such as web automation frameworks, and agents with access to many tools.

The focus has shifted from filtering out bad context to making the right context available at the right time, which lays the foundation for the next level.

Building Compound Learning Systems

Level four introduces compounding engineering, which improves every future session rather than just the current one. It follows a plan-delegate-assess-codify cycle: developers plan a task with sufficient context, delegate it to a language model, evaluate the output, and, crucially, codify the lessons learned.
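The cycle can be sketched as a loop whose last step writes to durable storage, so the next session starts with the lesson already in context. Everything here is hypothetical: `delegate` is a toy stand-in for a coding agent that only gets migrations right when the prompt mentions them, `assess` stands in for your review or tests, and the lessons file is an assumed location. The point is only that each lesson is persisted instead of vanishing when the session ends.

```python
import tempfile
from pathlib import Path
from typing import Optional

def plan(task: str, lessons: list[str]) -> str:
    # Plan with context: fold previously codified lessons into the prompt.
    notes = "\n".join(f"- {lesson}" for lesson in lessons)
    return f"Task: {task}\nKnown lessons:\n{notes}"

def delegate(prompt: str) -> str:
    # Hypothetical toy agent: handles migrations only if the prompt mentions them.
    if "migrations" in prompt:
        return "patch with migrations"
    return "patch that forgets to run migrations"

def assess(output: str) -> Optional[str]:
    # Hypothetical review: return a lesson when something went wrong, else None.
    if "forgets to run migrations" in output:
        return "Schema changes must include migrations."
    return None

def codify(lesson: str, lessons_file: Path) -> None:
    # Persist the lesson so the next (stateless) session starts with it.
    with lessons_file.open("a", encoding="utf-8") as f:
        f.write(lesson + "\n")

def run_session(task: str, lessons_file: Path) -> str:
    lessons = lessons_file.read_text(encoding="utf-8").splitlines() if lessons_file.exists() else []
    output = delegate(plan(task, lessons))
    lesson = assess(output)
    if lesson is not None:
        codify(lesson, lessons_file)
    return output

with tempfile.TemporaryDirectory() as d:
    lessons_file = Path(d) / "lessons.md"
    first = run_session("Add a due_date column to tasks", lessons_file)
    second = run_session("Add a due_date column to tasks", lessons_file)
print(first)   # patch that forgets to run migrations
print(second)  # patch with migrations
```

The second session succeeds not because the model changed but because the first session’s lesson was written down and loaded back in.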

Because language models are stateless between sessions, the codification step is what keeps them from repeating past mistakes. The most common approach is updating rules files, although piling on instructions can be counterproductive. A better approach is to keep context discoverable, for example in organized documentation folders the agent reads when relevant.
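Instead of one ever-growing rules file, a short index can point the agent at topic files it opens only when they are relevant. This sketch assumes a hypothetical `docs/agents/` folder in which each Markdown file starts with a one-line summary heading; `build_index` produces the compact index that would go into the rules file or system prompt, leaving the full files for the agent to read on demand.

```python
import tempfile
from pathlib import Path

def first_line(text: str) -> str:
    # Use the first non-empty line, minus any Markdown heading marks, as the summary.
    for line in text.splitlines():
        if line.strip():
            return line.lstrip("#").strip()
    return "(empty)"

def build_index(docs_dir: Path) -> str:
    """Return one line per doc: its relative path and its one-line summary."""
    lines = []
    for path in sorted(docs_dir.rglob("*.md")):
        summary = first_line(path.read_text(encoding="utf-8"))
        lines.append(f"{path.relative_to(docs_dir).as_posix()}: {summary}")
    return "\n".join(lines)

with tempfile.TemporaryDirectory() as d:
    docs = Path(d) / "docs" / "agents"  # hypothetical layout
    docs.mkdir(parents=True)
    (docs / "testing.md").write_text("# How to run and write tests\nUse pytest...\n", encoding="utf-8")
    (docs / "database.md").write_text("# Migration and schema conventions\n...\n", encoding="utf-8")
    index = build_index(docs)
print(index)
# database.md: Migration and schema conventions
# testing.md: How to run and write tests
```

The index costs a line per topic, so adding a new lesson file grows the always-loaded context only slightly.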

At this level, practitioners develop an intuition for asking what context was missing when an error occurs, rather than blaming the model’s competence, and that habit is what enables progression to the advanced levels.

Expanding Capabilities Through Tools and Protocols

The fifth level expands what agents can do through Model Context Protocol (MCP) servers and custom skills, giving language models access to databases, APIs, continuous integration pipelines, design systems, and testing frameworks.

Advanced practitioners build review skills that conditionally invoke subagents for specific concerns, such as database integration safety, complexity analysis, and prompt formatting. This automation becomes essential once agents produce pull requests in high volume, because human review then stops being only a quality gate and becomes the throughput bottleneck.
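A review skill with conditional subagents is essentially a dispatcher: inspect the changed files, then launch only the specialist reviewers whose concerns the change touches. The reviewer names and path patterns below are hypothetical examples; in practice each selected name would launch a subagent with its own focused prompt. The sketch uses `fnmatch.fnmatchcase`, whose `*` also matches across `/`.

```python
import fnmatch

# Hypothetical routing rules: reviewer name -> file patterns that trigger it.
REVIEWERS = {
    "database-safety": ["*migrations/*", "*.sql", "*models.py"],
    "prompt-format": ["*prompts/*", "*.prompt"],
}
ALWAYS_RUN = ["complexity"]  # hypothetical reviewer that runs on every change

def select_reviewers(changed_files: list[str]) -> list[str]:
    """Return the reviewers (subagents) to launch for this diff."""
    selected = list(ALWAYS_RUN)
    for reviewer, patterns in REVIEWERS.items():
        if any(fnmatch.fnmatchcase(f, p) for f in changed_files for p in patterns):
            selected.append(reviewer)
    return selected

print(select_reviewers(["app/models.py", "app/migrations/0042_add_due_date.py"]))
# ['complexity', 'database-safety']
print(select_reviewers(["docs/README.md"]))
# ['complexity']
```

Routing on the diff keeps each subagent’s context small and skips reviewers that have nothing to check.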

Many teams consolidate individual skills into shared registries and treat skills like code, with version control, pull requests, and reviews. Command-line interfaces are increasingly preferred over MCP servers for token efficiency, because a CLI call injects only its relevant output into the context window instead of full tool schemas.
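The token argument is easiest to see in how a command’s output is handed to the model. This sketch runs a command with Python’s standard `subprocess.run` and keeps only lines matching a few keywords plus a short tail, rather than pasting the whole log; `run_for_agent`, the keyword list, and the tail length are assumptions to tune per tool.

```python
import subprocess
import sys

def run_for_agent(cmd: list[str], keywords: tuple[str, ...] = ("FAIL", "Error"), tail: int = 3) -> str:
    """Run a CLI command and return a compact summary for the model's context."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    lines = (proc.stdout + proc.stderr).splitlines()
    relevant = [line for line in lines if any(k in line for k in keywords)]
    summary = relevant + lines[-tail:] if tail else relevant
    # Drop duplicates while preserving order (tail lines may also match keywords).
    seen, compact = set(), []
    for line in summary:
        if line not in seen:
            seen.add(line)
            compact.append(line)
    return f"exit={proc.returncode}\n" + "\n".join(compact)

# Simulated noisy test run: 100 passing lines, one failure, one summary line.
noisy = "for i in range(100): print(f'ok {i}')\nprint('FAIL test_login')\nprint('1 failed, 99 passed')"
summary_text = run_for_agent([sys.executable, "-c", noisy])
print(summary_text)
# exit=0
# FAIL test_login
# ok 99
# 1 failed, 99 passed
```

Four lines reach the context window instead of 102, and the failure the agent needs to act on is still there.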

Automated Feedback and Environment Engineering

Level six introduces harness engineering: building the environments and feedback loops that let agents work autonomously and reliably. The harness extends beyond code editing to a complete development environment with feedback mechanisms built in.

Advanced implementations integrate browser development tools, observability systems, and user interface navigation into agent runtimes, allowing agents to reproduce bugs, record demonstrations, implement fixes, validate changes, and manage the entire development lifecycle with minimal human intervention.

Key principles include designing for throughput over perfection, tolerating small non-blocking errors while keeping a final quality gate, and favoring constraints over detailed instructions. Modern agents respond better to clear boundaries and test-based objectives than to step-by-step checklists.

Backpressure, in the form of automated feedback such as type checking, tests, and security boundaries, is what enables true autonomy without letting quality degrade.

Background Agent Operations

The seventh level marks a fundamental shift toward autonomous background work. As models’ one-shot success rates improve, traditional plan mode becomes less necessary for experienced practitioners who have well-established context and feedback systems.

Background agents work asynchronously while developers focus on other tasks. A popular pattern is the autonomous coding loop, which keeps iterating until the requirements are met; such loops need precise specifications, or errors compound from one iteration to the next.
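An autonomous coding loop reduces to a few steps: give the agent the same specification on every iteration, let it change the code, check the result against the spec, and stop when every check passes or an iteration cap is reached. In this sketch, `agent_step` is a hypothetical stand-in for an agent invocation, the iteration cap is an assumption added to bound runaway loops, and the specification is written as executable checks, since imprecise specs are what let errors compound.

```python
from typing import Callable

Spec = dict[str, Callable[[dict], bool]]  # requirement name -> executable check

def unmet(spec: Spec, state: dict) -> list[str]:
    return [name for name, check in spec.items() if not check(state)]

def agent_step(state: dict, todo: list[str]) -> dict:
    # Hypothetical agent: addresses the first unmet requirement per iteration.
    new_state = dict(state)
    new_state[todo[0]] = True
    return new_state

def run_loop(spec: Spec, state: dict, max_iters: int = 10) -> tuple[dict, int]:
    """Iterate until the spec is met; fail loudly instead of looping forever."""
    for i in range(max_iters):
        todo = unmet(spec, state)
        if not todo:
            return state, i  # all requirements met
        state = agent_step(state, todo)
    if not unmet(spec, state):
        return state, max_iters
    raise RuntimeError(f"spec not met after {max_iters} iterations: {unmet(spec, state)}")

spec: Spec = {
    "endpoint_exists": lambda s: s.get("endpoint_exists", False),
    "tests_pass": lambda s: s.get("tests_pass", False),
    "docs_updated": lambda s: s.get("docs_updated", False),
}
final_state, iterations = run_loop(spec, {})
print(iterations)  # 3
```

With `max_iters=2` the same call raises `RuntimeError`, which is the point where a human should look at the spec rather than let the loop keep going.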

Orchestration becomes crucial once multiple agents run in parallel. Effective orchestrators dispatch tasks, track progress, and escalate issues, while keeping their own context windows clean for high-level oversight. Different setups suit different needs, from local tools for rapid development to cloud-hosted sandboxes for long-running autonomous work.
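A minimal orchestrator keeps a queue of tasks, records each task’s status, retries failures, and escalates to a human after repeated failures; only short status values, not full agent transcripts, reach the orchestrator. This sketch runs tasks sequentially for clarity, whereas a real orchestrator would dispatch them in parallel; `run_agent` is a hypothetical stand-in for handing a task to a background agent, and the retry limit is an assumption.

```python
from collections import deque
from typing import Callable

def orchestrate(tasks: list[str], run_agent: Callable[[str], bool], max_attempts: int = 2):
    """Dispatch tasks, track status, and escalate tasks that keep failing."""
    queue = deque(tasks)
    attempts = {t: 0 for t in tasks}
    status: dict[str, str] = {}
    escalated: list[str] = []
    while queue:
        task = queue.popleft()
        attempts[task] += 1
        if run_agent(task):
            status[task] = "done"
        elif attempts[task] < max_attempts:
            status[task] = "retrying"
            queue.append(task)  # requeue behind other work
        else:
            status[task] = "escalated"
            escalated.append(task)  # hand off to a human
    return status, escalated

# Hypothetical agent: succeeds on everything except the migration task.
status, escalated = orchestrate(
    ["add endpoint", "write tests", "migrate db"],
    run_agent=lambda task: task != "migrate db",
)
print(status)     # {'add endpoint': 'done', 'write tests': 'done', 'migrate db': 'escalated'}
print(escalated)  # ['migrate db']
```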

Choosing models by role also improves output quality: different language models handle implementation, research, and review according to their strengths. Keeping the reviewer separate from the implementer reduces bias and makes evaluation more accurate.
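Role-based model selection can be as simple as a routing table plus a rule that the reviewer is never the model that wrote the code. The model identifiers below are placeholders, not recommendations, and `ROLE_MODELS`, `assign`, and `assign_reviewer` are a hypothetical configuration.

```python
# Placeholder model identifiers; substitute whatever your providers offer.
ROLE_MODELS = {
    "implement": "model-a",
    "research": "model-b",
    "review": "model-c",
}

def assign(role: str) -> str:
    return ROLE_MODELS[role]

def assign_reviewer(implementer: str, candidates: list[str]) -> str:
    """Pick the first candidate reviewer that did not write the code."""
    for model in candidates:
        if model != implementer:
            return model
    raise ValueError("no independent reviewer available")

impl = assign("implement")
reviewer = assign_reviewer(impl, [assign("review"), assign("research")])
print(impl, reviewer)  # model-a model-c
```

Raising instead of silently falling back to the implementer keeps self-review from slipping in when the preferred reviewer is unavailable.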

Coordinated Agent Teams

The eighth level is the current frontier: multiple agents coordinate with each other directly, without a central orchestrator. Early implementations have parallel agent teams working on a shared codebase and communicating with one another directly.

Experiments so far show both promise and problems. Large projects such as building a compiler or a web browser show that the approach is feasible, but without a clear hierarchy and continuous integration safeguards, coordination breaks down: agents become risk-averse, progress stalls, and their changes conflict with each other.
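One simple safeguard against agents colliding on the same work is an atomic claim: before starting a task, an agent tries to create a lock file for it, and only one creator can succeed. This is a sketch of that idea using `os.open` with `O_CREAT | O_EXCL`, a standard way to create a file only if it does not already exist; `try_claim`, `release`, the lock directory, and the task names are hypothetical, and a setup where agents share a repository would also need to sync claims between them, which is not shown.

```python
import os
import tempfile
from pathlib import Path

def try_claim(lock_dir: Path, task: str, agent: str) -> bool:
    """Atomically claim `task`; return False if another agent already holds it."""
    lock_path = lock_dir / f"{task}.lock"
    try:
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as f:
        f.write(agent)  # record the owner for debugging and escalation
    return True

def release(lock_dir: Path, task: str) -> None:
    (lock_dir / f"{task}.lock").unlink(missing_ok=True)

with tempfile.TemporaryDirectory() as d:
    locks = Path(d)
    results = [
        try_claim(locks, "parser", "agent-1"),
        try_claim(locks, "parser", "agent-2"),   # same task: refused
        try_claim(locks, "codegen", "agent-2"),
    ]
    release(locks, "parser")
    results.append(try_claim(locks, "parser", "agent-2"))  # free again
print(results)  # [True, False, True, True]
```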

Given current limits on model speed, token efficiency, and coordination, level seven remains the practical target for most development work, though level eight may become standard as the technology matures.

Future Directions

Looking further ahead, voice interaction with coding agents is a natural next step: developers describe changes out loud and watch them being implemented in real time.

Chasing perfect one-shot development misses the fundamentally iterative nature of building software. Requirements evolve as people see the software take shape, so future advances are more likely to come from better ways of interacting and faster iteration than from eliminating iteration altogether.

Knowing your current level and working systematically toward the next ones is the most effective way to get the full benefit of AI-assisted development.